Category: Machine Learning Security

-

AI Red Teaming: Breaking Your Models Before Attackers Do

How to stress-test your AI systems, then find and fix their real vulnerabilities before someone else does. TL;DR: AI red teaming is an adversarial, multidisciplinary practice that probes production and pre-production models to surface security, safety, privacy, and misuse risks. It borrows from cyber red teams but expands to data, model artifacts, pre-trained components, prompt…

-

From DevSecOps to MLSecOps: Securing the AI Development Lifecycle

In recent years, organisations have matured their software-development practices through models like DevSecOps, which integrates security (“Sec”) into the development (“Dev”) and operations (“Ops”) lifecycle. Now, as artificial intelligence (AI) and machine-learning (ML) systems become core to business operations, a new discipline is emerging: MLSecOps (Machine Learning Security Operations). MLSecOps takes the DevSecOps ethos but extends…

-

ML Supply Chain Security: Protecting the Pipeline of Machine Learning

Machine Learning (ML) is the backbone of modern digital transformation, powering fraud detection, medical diagnostics, recommendation engines, and more. But with great adoption comes great risk. ML systems are not isolated models; they rely on a complex supply chain of data, frameworks, libraries, pre-trained models, APIs, and deployment pipelines. Each of these dependencies introduces security…

-

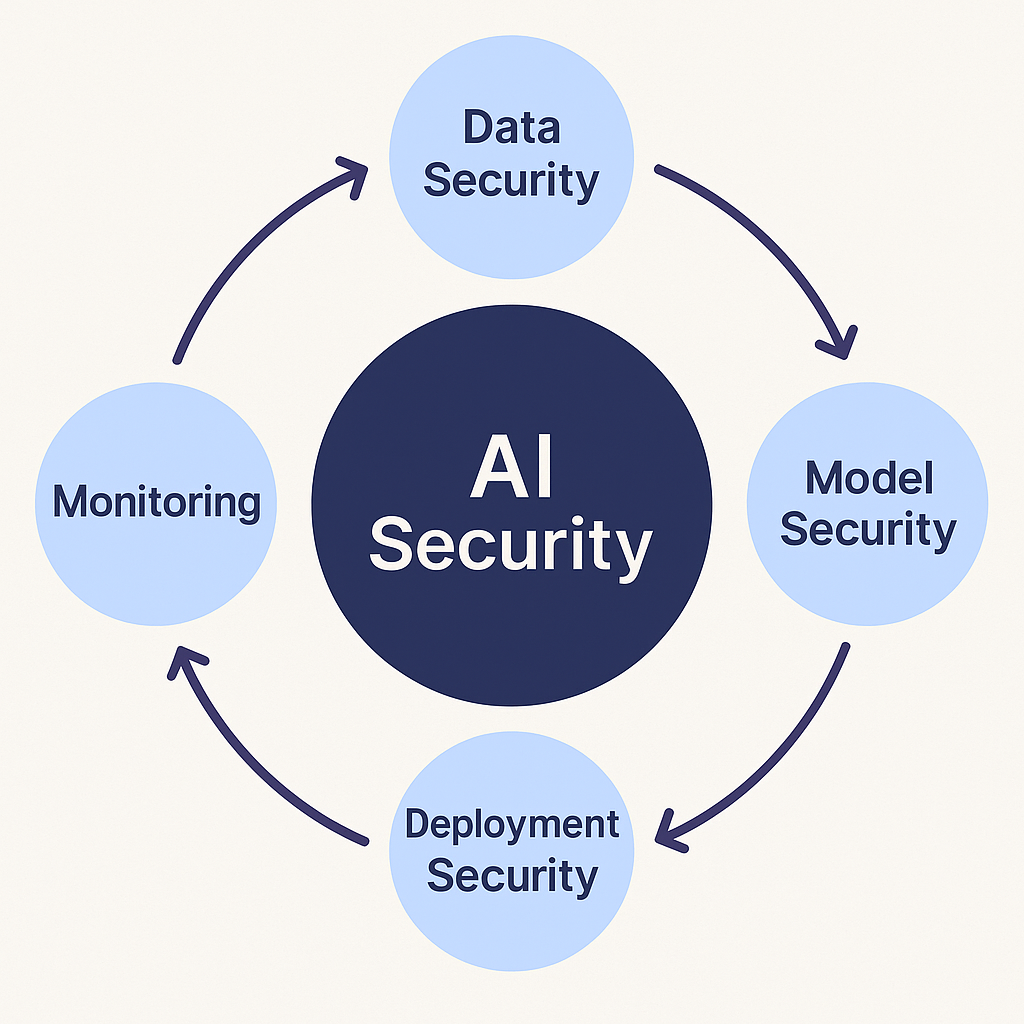

Security in AI: Safeguarding the Future of Intelligent Systems

Artificial Intelligence (AI) has become the backbone of modern innovation – powering chatbots, autonomous systems, medical diagnoses, financial predictions, and even cybersecurity defenses. But as AI grows in capability, it also introduces new attack surfaces and unique vulnerabilities that traditional security models fail to address. AI security is no longer optional; it is a strategic…