Tag: AI Security

-

From Attack Trees to Threat Models

Turning Adversarial Paths into Defensible Architecture. Attack trees are where good security conversations begin. Threat models are where they become actionable. Most organizations stop too early: they build attack trees, then fail to convert them into system-enforced guarantees. This blog explains how to turn attack trees into formal threat models that directly influence cloud,…

-

The Ghost in the Firewall: Why Cloud, Kubernetes, and AI Attacks Bypass Traditional Security

For decades, firewalls were treated as the final authority on security. If traffic passed the firewall, it was trusted. If it didn’t, it was blocked. That mental model is now broken. Modern breaches increasingly happen without violating a single firewall rule. No port scans. No exploits. No IDS alerts. This is the era of the Ghost…

-

From DevSecOps to MLSecOps: Securing the AI Development Lifecycle

In recent years, organisations have matured their software-development practices through models like DevSecOps, which integrates security (“Sec”) into the development (“Dev”) and operations (“Ops”) lifecycle. Now, as artificial intelligence (AI) and machine-learning (ML) systems become core to business operations, a new discipline is emerging: MLSecOps (Machine Learning Security Operations). MLSecOps takes the DevSecOps ethos but extends…

-

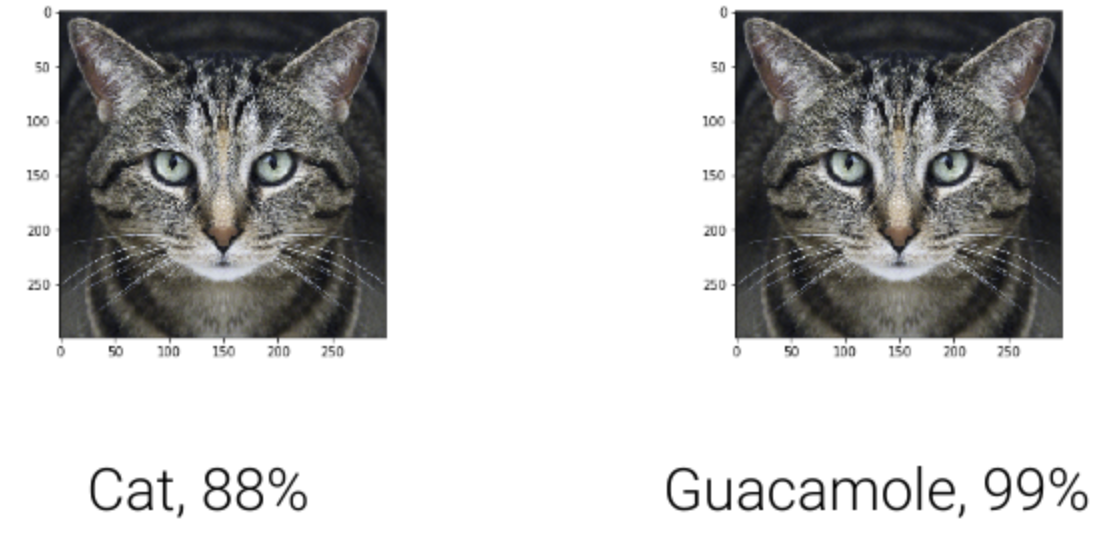

Adversarial AI in the Wild: Real-World Attack Scenarios and Defenses

AI is no longer just predicting clicks and classifying cats; it’s browsing the web, writing code, answering customer tickets, summarizing contracts, moving money, and controlling workflows through tools and APIs. That power makes AI systems an attractive new attack surface, often glued together with natural-language “guardrails” that can be talked around. This guide distills the…

-

Shadow AI: The Hidden Risk Lurking Inside Organizations

Artificial Intelligence (AI) has become the driving force behind innovation in enterprises, optimizing operations, enabling predictive analytics, and enhancing decision-making. But with AI’s rapid adoption comes a dangerous byproduct: Shadow AI. Just as “shadow IT” once described unsanctioned apps and tools used without IT’s approval, Shadow AI refers to AI systems, models, and tools deployed…