Machine identity is quietly becoming the dominant identity problem on the internet. Not user logins. Not passwords. Not MFA. It’s services, workloads, agents, pipelines, and devices authenticating to other services, at cloud scale, across networks you don’t fully control, with lifetimes measured in seconds.

In that world, token exchange is more than an OAuth feature. It’s a foundational pattern for how machines will prove who they are, what they’re allowed to do, and under what constraints, all without shipping long-lived secrets everywhere.

This post goes deep into token exchange: what it is, why it matters, how it works in modern systems, and how it shapes the future of machine identity (especially with agents and autonomous workflows).

1) The machine identity problem is different from human identity

Human identity systems optimize for:

- interactive logins

- MFA and recovery flows

- sessions lasting hours

- browser-based UX

- user consent and delegated permissions

Machine identity systems optimize for:

- non-interactive authentication

- zero/low trust networks

- high frequency calls (thousands/sec)

- short-lived credentials

- strong boundaries between services and environments

- auditable, least privilege decisions at runtime

Machine identities include:

- microservices and APIs

- Kubernetes pods / service accounts

- CI/CD jobs (GitHub Actions, GitLab CI, Jenkins)

- serverless functions

- data pipelines and ETL

- IoT devices

- AI agents/tools acting on behalf of users or systems

The hard truth: static secrets don’t scale here.

- leaked API keys are invisible until abused

- secret rotation is painful and slow

- secrets get copied into logs, env vars, containers, build artifacts

- a single compromised workload can become a “credential printing press”

The modern direction is: no long-lived secrets; use short-lived tokens and exchanges.

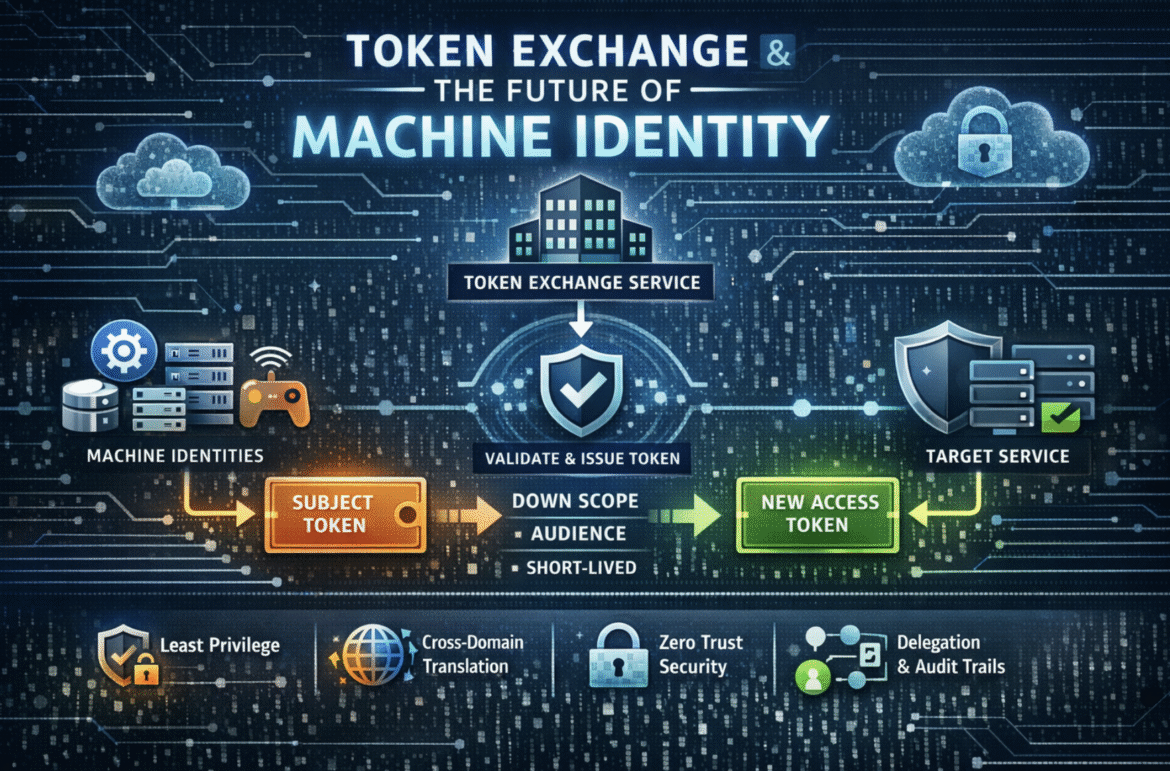

2) What “token exchange” actually means

Token exchange is the pattern where:

a caller presents one token (or credential) to a trusted authority and receives a new token for a different audience, scope, or context, often with tighter permissions and a shorter lifetime.

The canonical spec is OAuth 2.0 Token Exchange (RFC 8693), commonly used for:

- service-to-service delegation

- downscoping privileges

- cross-identity-domain translation (e.g., external identity → internal token)

- act-as / on-behalf-of flows

Key concepts (in plain language)

- Subject token: what you already have (e.g., workload identity token, user token, device token)

- Actor token: “the thing doing the calling” if different from the subject (useful in delegation)

- Token issuer / exchange service: the authority that validates the input and mints a new token

- Audience: where the new token is allowed to be used (target API/service)

- Scope / permissions: what the new token allows (prefer minimal)

- Lifetime: often short (minutes or less)

- Claims: structured facts inside the token (who, what, where, why, under which constraints)

Token exchange isn’t just about switching formats. It’s about changing trust boundaries safely.
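To make those concepts concrete, here is a minimal sketch of the form-encoded request body a caller would send to the token endpoint. The `grant_type` and token type URNs come from RFC 8693; the function name, the placeholder token, and the audience and scope values are illustrative assumptions, not part of any particular product.

```python
from urllib.parse import urlencode

# Identifiers defined by RFC 8693.
GRANT_TYPE = "urn:ietf:params:oauth:grant-type:token-exchange"
JWT_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:jwt"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_exchange_request(subject_token, audience, scope, actor_token=None):
    """Return the form-encoded body for a token exchange request (hypothetical helper)."""
    params = {
        "grant_type": GRANT_TYPE,
        "subject_token": subject_token,          # what you already have
        "subject_token_type": JWT_TOKEN_TYPE,
        "requested_token_type": ACCESS_TOKEN_TYPE,
        "audience": audience,                    # where the new token may be used
        "scope": scope,                          # what the new token allows
    }
    if actor_token is not None:
        # RFC 8693 requires actor_token_type whenever actor_token is sent.
        params["actor_token"] = actor_token
        params["actor_token_type"] = JWT_TOKEN_TYPE
    return urlencode(params)


# Placeholder values for illustration only.
body = build_exchange_request(
    subject_token="<workload-identity-jwt>",
    audience="risk-engine",
    scope="risk:score:write",
)
```

The body would be POSTed to the authorization server's token endpoint with `Content-Type: application/x-www-form-urlencoded`; how the caller authenticates itself on that request depends on your deployment.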

3) Why token exchange is becoming core to machine identity

3.1 Machines need “just enough” permission at runtime

A CI job might need:

- access to one artifact bucket

- for ten minutes

- from one repo

- on one branch

- in one environment

Token exchange lets you do:

- downscoping: take a broad identity and mint a token with narrower rights

- audience restriction: token is useless anywhere except the target service

- context binding: token includes build/run metadata and policy decisions
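As a rough sketch of the downscoping rule, an exchange service might only ever shrink scopes and lifetimes, never grow them. The function name, scope strings, and the 600-second cap below are assumptions chosen to mirror the ten-minute CI example.

```python
def downscope(held_scopes, requested_scopes, held_ttl_s, requested_ttl_s, max_ttl_s=600):
    """Return (scopes, ttl) for the new token, or raise if the request widens access.

    Hypothetical helper: the new token may carry only scopes the caller already
    holds, and its lifetime is capped by the caller's remaining lifetime and a
    hard service-wide maximum.
    """
    held = set(held_scopes)
    requested = set(requested_scopes)
    extra = requested - held
    if extra:
        raise PermissionError(f"scopes not held by caller: {sorted(extra)}")
    ttl = min(held_ttl_s, requested_ttl_s, max_ttl_s)
    return sorted(requested), ttl


# A CI identity holding read and write asks for read-only access for 15 minutes;
# it receives read-only access capped at the 10-minute maximum.
scopes, ttl = downscope(
    held_scopes=["artifacts:read", "artifacts:write"],
    requested_scopes=["artifacts:read"],
    held_ttl_s=3600,
    requested_ttl_s=900,
)
```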

3.2 Machines increasingly operate across domains

Examples:

- a partner calls your API using their IdP, but you need an internal token format/claims

- workloads in one cloud access resources in another cloud

- a user interacts with an app, and an agent spins up tools across multiple internal services

Token exchange becomes the “identity router”:

- validate external/foreign identity

- map to internal principals

- enforce policy centrally

- mint a token your services understand
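The "identity router" idea can be sketched as a lookup from a foreign (issuer, subject) pair to an internal principal. This assumes the external token's signature and expiry were already verified; the issuer URL, principal names, and claim layout are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InternalPrincipal:
    name: str
    tenant: str


# Hypothetical mapping from (external issuer, external subject) to internal principal.
PRINCIPALS = {
    ("https://idp.partner.example", "svc-orders"): InternalPrincipal("partner-acme-orders", "acme"),
}


def route_identity(external_claims):
    """Map already-verified external claims to an internal principal, or refuse."""
    key = (external_claims.get("iss"), external_claims.get("sub"))
    principal = PRINCIPALS.get(key)
    if principal is None:
        raise PermissionError(f"no internal mapping for {key}")
    return principal


def internal_claims(principal, audience, scope):
    """Build the claim set an internal service would understand (illustrative layout)."""
    return {"sub": principal.name, "tenant": principal.tenant, "aud": audience, "scope": scope}


partner = route_identity({"iss": "https://idp.partner.example", "sub": "svc-orders"})
claims = internal_claims(partner, audience="orders-api", scope="orders:read")
```

Keeping the mapping in one place means downstream services never need to trust, or even parse, the partner's token format.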

3.3 Zero Trust wants strong, continuous authorization

Zero Trust is not only “verify identity.” It’s “verify identity + device/workload + context + policy” continuously.

Token exchange is how you:

- keep tokens short-lived

- re-evaluate policy frequently

- apply dynamic constraints (IP, network zone, workload attestation, risk score)

- reduce blast radius from compromise

4) The anatomy of a token exchange flow (service-to-service)

Let’s walk a realistic example.

Scenario

A Kubernetes workload (payments-worker) needs to call the risk-engine API.

- It should not have a long-lived API key

- It should not get a token valid for every internal service

- It should not get broad scopes

Flow

- payments-worker starts with a workload identity token (e.g., projected service account token or SPIFFE/SVID-derived assertion).

- It calls an internal Token Exchange Service (could be your IdP/authorization server).

- The exchange service:

  - validates the workload token (signature, issuer, audience, expiry)

  - checks policy: is payments-worker allowed to call risk-engine?

  - optionally checks posture/attestation (node integrity, runtime constraints)

- It mints a new access token:

  - audience = risk-engine

  - scope = risk:score:write (or even narrower)

  - expiry = 2–5 minutes

  - includes claims: workload identity, namespace, environment, policy decision id

- payments-worker calls risk-engine with that new token.

- risk-engine validates:

  - signature (trust issuer)

  - audience match

  - expiry

  - scope/claims

  - optionally request-level policy checks (ABAC)

Result: no static secret, minimal permissions, narrow audience, strong traceability.
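The flow above can be sketched end to end with nothing but the standard library. This is a simplified illustration, not a production design: it signs with HMAC (HS256) and a shared demo key so the example stays self-contained, whereas a real exchange service would normally sign with an asymmetric key held in an HSM/KMS and publish the public key. The issuer URL, the policy table, and the function names are assumptions, and the workload token is taken as already validated.

```python
import base64
import hashlib
import hmac
import json
import time
import uuid

ISSUER = "https://exchange.internal.example"  # hypothetical issuer
SIGNING_KEY = b"demo-only-key"                # demo only; use an asymmetric key in HSM/KMS
# Hypothetical policy: (caller, target) -> scopes the caller may request.
POLICY = {("payments-worker", "risk-engine"): {"risk:score:write"}}


def _b64url(data):
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text):
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(signing_input):
    mac = hmac.new(SIGNING_KEY, signing_input.encode("ascii"), hashlib.sha256)
    return _b64url(mac.digest())


def exchange(workload_claims, audience, scope, ttl_s=180, now=None):
    """Exchange service: check policy, then mint a narrow, short-lived token."""
    now = int(time.time()) if now is None else now
    caller = workload_claims["sub"]
    if scope not in POLICY.get((caller, audience), set()):
        raise PermissionError(f"{caller} may not request {scope} on {audience}")
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {
        "iss": ISSUER, "sub": caller, "aud": audience, "scope": scope,
        "iat": now, "exp": now + ttl_s, "jti": str(uuid.uuid4()),
        "namespace": workload_claims.get("namespace"),
    }
    signing_input = ".".join(
        _b64url(json.dumps(part).encode("utf-8")) for part in (header, claims)
    )
    return f"{signing_input}.{_sign(signing_input)}"


def validate(token, expected_audience, required_scope, now=None):
    """Resource server: verify signature, issuer, audience, expiry, and scope."""
    now = int(time.time()) if now is None else now
    header_b64, claims_b64, signature = token.split(".")
    if not hmac.compare_digest(signature, _sign(f"{header_b64}.{claims_b64}")):
        raise ValueError("bad signature")
    header = json.loads(_b64url_decode(header_b64))
    claims = json.loads(_b64url_decode(claims_b64))
    if header.get("alg") != "HS256" or claims.get("iss") != ISSUER:
        raise ValueError("untrusted issuer or algorithm")
    if claims.get("aud") != expected_audience:
        raise ValueError("audience mismatch")
    if claims.get("exp", 0) <= now:
        raise ValueError("token expired")
    if required_scope not in claims.get("scope", "").split():
        raise ValueError("missing scope")
    return claims
```

A typical call sequence would be `token = exchange({"sub": "payments-worker", "namespace": "payments"}, "risk-engine", "risk:score:write")` on the exchange side and `validate(token, "risk-engine", "risk:score:write")` inside risk-engine. The same token presented to any other audience fails the audience check.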

5) Delegation: “on-behalf-of” and “act-as” for machines + users

The next era isn’t only service-to-service. It’s delegation chains.

Example: user → API → downstream services

A user calls your API (frontend-api), which in turn calls billing, shipping, and notifications.

If you pass the user token everywhere:

- downstream services need to understand the user token format

- scopes explode

- auditing becomes messy

- you risk over-privilege

Instead:

frontend-api exchanges the user token for a downstream token with:

- audience = billing

- scope = minimal billing operation

- claims include user identity reference (sub), tenant, and “actor” = frontend-api

Now every hop is:

- restricted to its destination

- tailored to the operation

- audit-friendly (“who initiated” vs “who acted”)

This becomes critical with AI agents, because you need to distinguish:

- the human who requested (“subject”)

- the agent/tool/service that executed (“actor”)

- the policy decision and constraints applied

Token exchange is the cleanest mechanism for that separation.
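RFC 8693 expresses this split with the `act` claim: `sub` stays the original subject, `act` names the current actor, and earlier actors are nested inside it. A minimal sketch of building and reading such a chain follows; the helper names, the `tenant` claim, and the service names are illustrative assumptions.

```python
def delegate(subject_claims, actor, audience, scope):
    """Build claims for a downstream token: same subject, new actor, narrower target."""
    new_claims = {
        "sub": subject_claims["sub"],   # who initiated
        "aud": audience,
        "scope": scope,
        "act": {"sub": actor},          # who is acting right now
    }
    if "tenant" in subject_claims:
        new_claims["tenant"] = subject_claims["tenant"]
    prior = subject_claims.get("act")
    if prior is not None:
        # Earlier actors are nested, so the outermost "act" is always the current one.
        new_claims["act"]["act"] = prior
    return new_claims


def actor_chain(claims):
    """Return actors from most recent to earliest."""
    chain = []
    act = claims.get("act")
    while act is not None:
        chain.append(act["sub"])
        act = act.get("act")
    return chain


user = {"sub": "user-123", "tenant": "acme"}
hop1 = delegate(user, "frontend-api", "billing", "billing:invoice:read")
hop2 = delegate(hop1, "billing", "ledger", "ledger:read")
```

After the second hop, `hop2["sub"]` is still `user-123`, while `actor_chain(hop2)` lists `billing` first and `frontend-api` second, which is the "who initiated" versus "who acted" distinction an audit log needs.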

6) Token exchange as a policy enforcement point

A powerful mental model:

Token exchange is not only authentication plumbing; it’s an authorization control plane.

Why? Because the exchange service is where you can centralize:

- workload allowlists/denylists

- environment separation (dev/stage/prod)

- tenant isolation

- conditional access (network zone, device posture)

- time-of-day rules

- risk-based constraints

- approval/just-in-time elevation

- anomaly signals from runtime security

Instead of embedding complex logic into every service, you can:

- enforce coarse-grained policy at the exchange

- enforce fine-grained policy at the resource server

This pattern scales.
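Here is one way the coarse-grained side might look: a small decision function that applies environment separation, tenant isolation, and an allowlist, and returns a decision id that can later be stamped into the token and the audit log. The field names, rule order, and allowlist entries are assumptions for illustration.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ExchangeRequest:
    caller: str
    target: str
    caller_env: str
    target_env: str
    caller_tenant: str
    target_tenant: str


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str
    decision_id: str = field(default_factory=lambda: str(uuid.uuid4()))


# Hypothetical allowlist of (caller, target) pairs.
ALLOWLIST = {("payments-worker", "risk-engine")}


def decide(req):
    """Coarse-grained policy check performed once, at the exchange."""
    if req.caller_env != req.target_env:
        return Decision(False, "environment mismatch")
    if req.caller_tenant != req.target_tenant:
        return Decision(False, "tenant mismatch")
    if (req.caller, req.target) not in ALLOWLIST:
        return Decision(False, "caller not allowlisted for target")
    return Decision(True, "allowed")


ok = decide(ExchangeRequest("payments-worker", "risk-engine", "prod", "prod", "acme", "acme"))
cross_env = decide(ExchangeRequest("payments-worker", "risk-engine", "dev", "prod", "acme", "acme"))
```

Fine-grained checks (for example, whether this caller may score this particular account) would still live in the resource server, which can see the request itself.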

7) Designing tokens for machine identity: claims that matter

For machine identity, tokens should carry claims that help resource servers decide quickly and audit later.

Useful claims:

- iss / aud / exp / iat (issuer, audience, expiration, issued at)

- sub (subject identity)

- act (actor identity / delegation)

- workload attributes:

  - cluster, namespace, service account

  - workload name/version

  - environment (prod/stage/dev)

  - tenant/customer id

- scp or permissions list

- jti (unique token id) for replay tracking

- policy decision id / correlation id

- attestation or trust level indicator (careful with size & privacy)

Principles:

- audience-restricted always

- short TTL (minutes, not hours)

- avoid embedding excessive PII

- don’t overload tokens as databases; include only what is needed
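These principles are easy to check mechanically before a token is signed. The sketch below is a hypothetical "claims lint" an issuer could run at mint time; the required-claim set, the list of PII keys, and the TTL and size limits are assumptions you would tune for your own environment.

```python
import json

REQUIRED = {"iss", "sub", "aud", "exp", "iat", "jti"}
PII_KEYS = {"email", "phone", "address"}  # hypothetical deny-list


def lint_claims(claims, max_ttl_s=600, max_bytes=1024):
    """Return a list of problems with a claim set; an empty list means it passes."""
    problems = []
    missing = REQUIRED - set(claims)
    if missing:
        problems.append(f"missing claims: {sorted(missing)}")
    if "exp" in claims and "iat" in claims and claims["exp"] - claims["iat"] > max_ttl_s:
        problems.append(f"ttl exceeds {max_ttl_s}s")
    pii = PII_KEYS & set(claims)
    if pii:
        problems.append(f"pii claims present: {sorted(pii)}")
    size = len(json.dumps(claims, separators=(",", ":")).encode("utf-8"))
    if size > max_bytes:
        problems.append(f"claims are {size} bytes, limit is {max_bytes}")
    return problems


good = {"iss": "https://exchange.internal.example", "sub": "payments-worker",
        "aud": "risk-engine", "iat": 1000, "exp": 1180, "jti": "abc"}
bad = {"iss": "https://exchange.internal.example", "sub": "payments-worker",
       "iat": 1000, "exp": 8200, "jti": "abc", "email": "dev@example.com"}
```

Here `lint_claims(good)` returns an empty list, while `lint_claims(bad)` reports the missing `aud`, the two-hour lifetime, and the `email` claim.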

8) Security tradeoffs and failure modes

Token exchange is powerful, but you must treat it as critical infrastructure.

8.1 Exchange service becomes a high-value target

If it is compromised, an attacker can mint tokens.

Mitigations:

- isolate and harden it (dedicated network segment)

- protect signing keys with HSM/KMS

- strict mTLS + authentication for callers

- rate limiting and anomaly detection

- strong auditing of every exchange

- key rotation and key id management

- minimal admin access

8.2 Token theft is still possible (but damage is bounded)

Tokens can be stolen from memory, logs, proxies, or sidecars.

Mitigations:

- short TTL (primary)

- audience restriction (primary)

- sender-constrained tokens (mTLS / DPoP) where feasible

- avoid logging headers; scrub telemetry

- encrypt in transit always; mTLS service mesh helps
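For the "avoid logging headers" point, one defensive layer is a logging filter that redacts anything that looks like a bearer credential before a record is emitted. This is a sketch using Python's standard `logging` module; the regular expression and the class name are assumptions, and a filter like this complements, rather than replaces, not logging headers in the first place.

```python
import logging
import re

# Matches "Bearer <token>" case-insensitively; the token alphabet is an approximation.
_BEARER = re.compile(r"(?i)(bearer\s+)[A-Za-z0-9._~+/=-]+")


def scrub(text):
    """Replace bearer credentials in free text with a fixed marker."""
    return _BEARER.sub(r"\1[REDACTED]", text)


class ScrubTokens(logging.Filter):
    """Redact bearer tokens from the fully formatted log message."""

    def filter(self, record):
        record.msg = scrub(record.getMessage())
        record.args = ()  # message is already formatted
        return True


# Attach to a handler so it applies to every record that handler emits.
handler = logging.StreamHandler()
handler.addFilter(ScrubTokens())
```

With the filter attached, a call such as `logger.info("upstream headers: %s", headers)` would emit `Bearer [REDACTED]` in place of the raw token.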

8.3 Overly broad scopes undo the whole point

If you mint “god tokens,” you’re just replacing API keys with JWT-shaped API keys.

Mitigations:

- enforce downscoping as a rule

- limit max scopes per service

- policy checks at exchange + resource server

- automated tests for token scope correctness

8.4 Token bloat and latency

Large JWTs add transfer and parsing overhead to every request and can exceed HTTP header size limits.

Mitigations:

- keep claims lean

- use reference tokens in some cases (opaque tokens with introspection)

- cache policy decisions carefully (but watch revocation/TTL)
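If you do cache policy decisions, keeping the cache lifetime short and supporting explicit revocation limits how stale a decision can get. A minimal sketch, assuming cache keys are tuples whose first element is the caller identity; the class name and the 30-second default are illustrative.

```python
import time


class DecisionCache:
    """Tiny TTL cache for policy decisions, with per-caller revocation."""

    def __init__(self, ttl_s=30, clock=time.monotonic):
        self._ttl_s = ttl_s
        self._clock = clock          # injectable so tests can control time
        self._entries = {}

    def get(self, key):
        """Return the cached decision, or None if absent or expired."""
        entry = self._entries.get(key)
        if entry is None:
            return None
        decision, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]
            return None
        return decision

    def put(self, key, decision):
        self._entries[key] = (decision, self._clock() + self._ttl_s)

    def revoke(self, caller):
        """Drop every cached decision for a caller, e.g. after a compromise signal."""
        for key in [k for k in self._entries if k[0] == caller]:
            del self._entries[key]
```

Keeping the cache TTL well below the token TTL means a revoked workload loses the ability to obtain new tokens quickly, even though tokens it already holds remain valid until they expire.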

9) Token exchange patterns you’ll see everywhere soon

Pattern A: Workload identity → internal service token

- Kubernetes service account token / SPIFFE SVID → access token for payments-api

- Common in service meshes and cloud-native architectures

Pattern B: External partner token → internal token

- Validate partner’s JWT, map to internal principal, mint internal token

- Enables clean boundary control

Pattern C: CI/CD identity → cloud/resource token

- Pipeline runner proves repo/run context → gets short-lived token for artifact registry, infra provisioning

- Reduces secret sprawl in pipelines
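As an illustration of Pattern C, the sketch below matches the claims of a GitHub Actions OIDC token (whose issuer is `https://token.actions.githubusercontent.com` and which carries `repository` and `ref` claims) against a rule table to decide what short-lived token to mint. The rule table, repository name, audience, and scope are hypothetical, and the token is assumed to have been signature-verified already.

```python
GITHUB_ISSUER = "https://token.actions.githubusercontent.com"

# Hypothetical rules: which repo/branch may receive which short-lived grant.
RULES = [
    {
        "repository": "example-org/payments",
        "ref": "refs/heads/main",
        "grant": {"audience": "artifact-registry", "scope": "artifacts:write", "ttl_s": 600},
    },
]


def grant_for_pipeline(claims):
    """Return the grant for an already-verified pipeline token, or None if no rule matches."""
    if claims.get("iss") != GITHUB_ISSUER:
        return None
    for rule in RULES:
        if claims.get("repository") == rule["repository"] and claims.get("ref") == rule["ref"]:
            return dict(rule["grant"])  # copy so callers cannot mutate the rule table
    return None


main_build = grant_for_pipeline({
    "iss": GITHUB_ISSUER,
    "repository": "example-org/payments",
    "ref": "refs/heads/main",
})
feature_build = grant_for_pipeline({
    "iss": GITHUB_ISSUER,
    "repository": "example-org/payments",
    "ref": "refs/heads/experiment",
})
```

The main-branch build receives a ten-minute write grant for the artifact registry; the feature-branch build receives nothing, with no secret stored in the pipeline either way.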

Pattern D: Agent delegation chain

- User request → agent token → tool tokens for specific APIs

- Each tool call gets a purpose-bound token minted just-in-time

This last one is likely to define the future.

10) The future: machine identity in an agentic world

As AI agents become operational actors, identity must answer new questions:

- Who initiated the action?

- What tool executed it?

- Was the tool allowed to do that for this user in this tenant?

- Under which constraints (time window, data scope, environment)?

- Can we replay and audit the chain of custody?

Token exchange supports this naturally:

- separate subject (human) from actor (agent/tool)

- enforce policy at every hop

- mint purpose-bound tokens per tool/action

- produce clean audit trails: request → exchange decision → token id → API call

In other words, token exchange becomes the backbone for:

- delegated authority

- fine-grained capability distribution

- provable accountability

We’re moving from “identity as login” to “identity as programmable trust.”

11) Practical implementation guidance (what to build next)

If you’re designing a modern machine identity system, prioritize this roadmap:

Step 1: Remove long-lived secrets where possible

- stop embedding API keys in services and pipelines

- measure secret sprawl (repos, CI vars, vault usage)

Step 2: Standardize a token issuer and trust model

- define issuers per environment (dev/stage/prod)

- enforce audience restrictions

- publish JWKS and rotation strategy

Step 3: Put token exchange behind strict controls

- authenticate callers strongly (mTLS, workload identity)

- apply policy checks (allowlists, ABAC)

- audit every exchange request/response metadata (not token content)
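A possible shape for that audit record is sketched below: it captures who asked, for whom, for which audience and scope, the policy decision, and the resulting token id, but never the token itself. The field names and helper are assumptions; the point is that `jti`, decision id, and correlation id are enough to join exchange logs with resource-server logs.

```python
import json
from datetime import datetime, timezone


def audit_record(correlation_id, caller, subject, audience, scope,
                 decision_id, allowed, jti=None):
    """Serialize exchange metadata as one JSON log line (no token content)."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "correlation_id": correlation_id,
        "caller": caller,          # actor that requested the exchange
        "subject": subject,        # identity the new token is about
        "audience": audience,
        "scope": scope,
        "decision_id": decision_id,
        "allowed": allowed,
        "jti": jti,                # id of the minted token; None when denied
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    correlation_id="req-42",
    caller="frontend-api",
    subject="user-123",
    audience="billing",
    scope="billing:invoice:read",
    decision_id="dec-7",
    allowed=True,
    jti="tok-9",
)
```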

Step 4: Enforce short TTL + downscoping

- set hard TTL caps by token type

- reject overly broad scopes

- implement “scope reduction only” rules when exchanging from user tokens

Step 5: Add sender constraints where it matters

- mTLS-bound tokens for high-risk internal services

- DPoP for public clients where feasible

Step 6: Operationalize observability and incident response

- token exchange logs with correlation ids

- detect unusual exchange patterns

- quick key rotation playbooks

Conclusion

Token exchange is not just OAuth plumbing. It’s a strategic primitive for modern security:

- it reduces secret sprawl

- it enables least privilege at runtime

- it supports delegation chains and auditability

- it scales across clouds, partners, and microservices

- it aligns with Zero Trust: short-lived tokens and policy re-evaluated at every exchange

- and it will be essential for agent-driven systems where machines act with delegated authority

The future of machine identity isn’t “store better secrets.”

It’s “mint the right trust, at the right time, for the right destination, with the right constraints.”

That’s token exchange.
